TMac: Tensor completion by parallel Matrix factorization

Background

Higher-order low-rank tensors with missing values arise naturally in many applications, including hyperspectral data recovery, video inpainting, and seismic data reconstruction. These problems can be formulated as low-rank tensor completion (LRTC). Existing methods [1, 2] for LRTC employ matrix nuclear-norm minimization and use the singular value decomposition (SVD) in their algorithms, which makes them very slow or even inapplicable for large-scale problems. To tackle this difficulty, we apply low-rank matrix factorization to each mode unfolding of the tensor to enforce low-rankness and update the matrix factors alternately, which is computationally much cheaper than SVD.

Our method

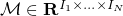

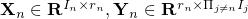

We aim at recovering a low-rank tensor  from partial observations

from partial observations  , where

, where  is the index set of observed entries, and

is the index set of observed entries, and  keeps the entries in

keeps the entries in  and zeros out others. We apply low-rank matrix factorization to each mode unfolding of

and zeros out others. We apply low-rank matrix factorization to each mode unfolding of  by finding matrices

by finding matrices  such that

such that  for

for  , where

, where  is the estimated rank, either fixed or adaptively updated. Introducing one common variable

is the estimated rank, either fixed or adaptively updated. Introducing one common variable  to relate these matrix factorizations, we solve the following model

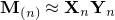

to relate these matrix factorizations, we solve the following model

where  and

and  . In the model,

. In the model,  ,

,  , are weights and satisfy

, are weights and satisfy  .

We use alternating least squares method to solve the model.

.

We solve the model by alternating least squares, updating one block of variables at a time while the others are held fixed.
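The alternating scheme can be sketched in NumPy as follows. This is a minimal illustration under stated assumptions, not the released Matlab code: the function names `unfold`, `fold`, and `tmac_sketch` are hypothetical, the ranks are held fixed, the weights default to equal values, and each factor update is a plain least-squares solve. Here `B` is an array holding the observed entries (zeros elsewhere) and `mask` is a boolean array marking the observed index set.

```python
import numpy as np

def unfold(T, n):
    """Mode-n unfolding: bring axis n to the front, flatten the other axes."""
    return np.moveaxis(T, n, 0).reshape(T.shape[n], -1)

def fold(M, n, shape):
    """Inverse of unfold for a tensor with the given shape."""
    rest = [s for i, s in enumerate(shape) if i != n]
    return np.moveaxis(M.reshape([shape[n]] + rest), 0, n)

def tmac_sketch(B, mask, ranks, alphas=None, iters=200, seed=0):
    """Alternating least squares on all mode unfoldings (fixed ranks)."""
    rng = np.random.default_rng(seed)
    N = B.ndim
    # Assumption: equal weights that sum to one unless the caller supplies them.
    alphas = np.full(N, 1.0 / N) if alphas is None else np.asarray(alphas)
    X = [rng.standard_normal((B.shape[n], ranks[n])) for n in range(N)]
    Y = [rng.standard_normal((ranks[n], B.size // B.shape[n])) for n in range(N)]
    Z = np.where(mask, B, 0.0)  # common variable, zero-filled at the start
    for _ in range(iters):
        estimate = np.zeros_like(Z)
        for n in range(N):
            Zn = unfold(Z, n)
            # X_n update: least-squares fit of X_n Y_n to Zn with Y_n fixed.
            X[n] = np.linalg.lstsq(Y[n].T, Zn.T, rcond=None)[0].T
            # Y_n update: least-squares fit with the new X_n fixed.
            Y[n] = np.linalg.lstsq(X[n], Zn, rcond=None)[0]
            estimate += alphas[n] * fold(X[n] @ Y[n], n, B.shape)
        # Z update: keep observed entries, fill the rest with the weighted
        # average of the folded factorizations.
        Z = np.where(mask, B, estimate)
    return Z
```

Each mode's factor update touches only its own unfolding, so the N updates inside one sweep are independent of each other and could run in parallel. No SVD is computed anywhere in the loop.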

Results

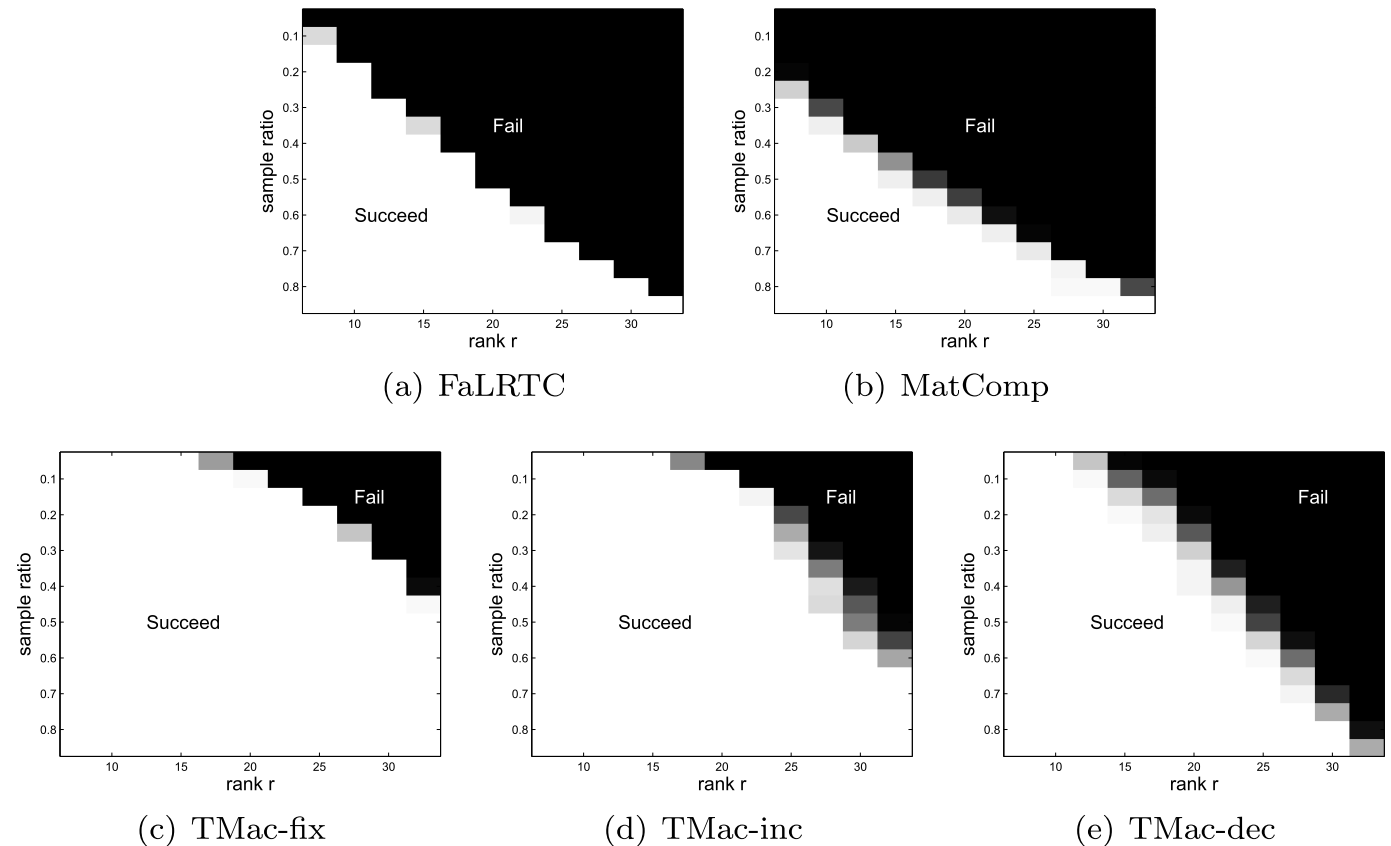

Our model is non-convex jointly with respect to $\mathbf{X}$ and $\mathbf{Y}$. Although a global solution is not guaranteed, we demonstrate by numerical experiments that our algorithm can reliably recover a wide variety of low-rank tensors, as the phase transition plots show. In the picture, each target tensor is generated from factors that have Gaussian random entries. The compared methods are:

(a) FaLRTC: the tensor completion method in [2].

(b) MatComp: first reshape the tensor as a matrix and then use the matrix completion solver LMaFit in [3].

(c) TMac-fix: our method with the rank estimates held fixed.

(d) TMac-inc: our method with the rank-increasing strategy.

(e) TMac-dec: our method with the rank-decreasing strategy.

The results show that our method performs much better than the other two methods.
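For readers who want to reproduce this kind of comparison, one cell of a phase transition plot can be estimated roughly as below. This is a hedged sketch: the helper names, the tensor size, the way the low-rank tensor is built from Gaussian factor matrices, and the success tolerance `tol=1e-3` are all illustrative assumptions rather than the settings used for the plots. The `solver` argument is any function that takes the zero-filled observations and the boolean mask and returns a completed tensor.

```python
import numpy as np

def relative_error(Z, M):
    """Relative recovery error ||Z - M||_F / ||M||_F."""
    return np.linalg.norm(Z - M) / np.linalg.norm(M)

def gaussian_problem(rng, size=20, rank=3, sample_ratio=0.5):
    """Hypothetical test problem: a 3-way tensor built from Gaussian
    factor matrices, with each entry observed independently."""
    A = [rng.standard_normal((size, rank)) for _ in range(3)]
    M = np.einsum('ir,jr,kr->ijk', A[0], A[1], A[2])
    mask = rng.random(M.shape) < sample_ratio
    return M, mask

def success_rate(solver, make_problem, trials=10, tol=1e-3, seed=0):
    """Fraction of random trials in which the solver's relative error
    is at most tol (assumed success criterion)."""
    rng = np.random.default_rng(seed)
    hits = 0
    for _ in range(trials):
        M, mask = make_problem(rng)
        Z = solver(np.where(mask, M, 0.0), mask)
        hits += int(relative_error(Z, M) <= tol)
    return hits / trials
```

Sweeping `rank` and `sample_ratio` over a grid and plotting the returned rate for each pair gives one panel of such a plot.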


Matlab code

Citation

Y. Xu, R. Hao, W. Yin, and Z. Su. Parallel matrix factorization for low-rank tensor completion, Inverse Problems and Imaging, 9(2015), 601–624.

References

[1] S. Gandy, B. Recht, and I. Yamada, Tensor completion and low-n-rank tensor recovery via convex optimization, Inverse Problems, 27 (2011), p. 025010.

[2] J. Liu, P. Musialski, P. Wonka, and J. Ye, Tensor completion for estimating missing values in visual data, IEEE Transactions on Pattern Analysis and Machine Intelligence, (2013), pp. 208-220.

[3] Z. Wen, W. Yin, and Y. Zhang, Solving a low-rank factorization model for matrix completion by a nonlinear successive over-relaxation algorithm, Mathematical Programming Computation, (2012), pp. 1-29.